Google has announced Gemini 3.1 Pro, the latest upgrade in its Gemini model family. While framed as a performance improvement, the release reflects a deeper shift in the AI industry: labs are now competing less on fluency and more on structured reasoning.

The model is being deployed across the Gemini app, Vertex AI, and NotebookLM, signaling Google’s strategy to unify consumer, developer, and enterprise AI experiences around a stronger reasoning core.

This isn’t just a model update.

It’s a directional signal.

What Does 77.1% on ARC-AGI-2 Actually Mean?

Google reports that Gemini 3.1 Pro achieved 77.1% on ARC-AGI-2, a benchmark designed to evaluate abstract reasoning and generalization.

Unlike traditional NLP benchmarks that measure language fluency, ARC-style evaluations test:

- Pattern abstraction

- Novel task generalization

- Multi-step logic

- Symbolic reasoning

In simple terms, ARC doesn’t ask, “Can the model write well?”

It asks, “Can the model think through unfamiliar problems?”
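To make that contrast concrete, here is a toy sketch of an ARC-style task in Python. ARC tasks show a few input/output grid pairs and ask the solver to produce the output for a new input. The candidate transformations and the `infer_rule` helper are illustrative assumptions. They are not how ARC-AGI-2 is scored or how Gemini approaches such tasks, and real tasks involve far richer rules than a fixed menu of flips and rotations.

```python
from typing import Callable, Optional

Grid = list[list[int]]


def identity(g: Grid) -> Grid:
    return [row[:] for row in g]


def flip_horizontal(g: Grid) -> Grid:
    # Mirror each row left-to-right.
    return [row[::-1] for row in g]


def flip_vertical(g: Grid) -> Grid:
    # Reverse the order of the rows.
    return [row[:] for row in g[::-1]]


def rotate_clockwise(g: Grid) -> Grid:
    # Rotate the grid by 90 degrees clockwise.
    return [list(col) for col in zip(*g[::-1])]


# Hypothetical hypothesis space: a tiny, fixed set of candidate rules.
CANDIDATES: dict[str, Callable[[Grid], Grid]] = {
    "identity": identity,
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "rotate_clockwise": rotate_clockwise,
}


def infer_rule(examples: list[tuple[Grid, Grid]]) -> Optional[str]:
    """Return the first candidate rule consistent with every example pair."""
    for name, fn in CANDIDATES.items():
        if all(fn(inp) == out for inp, out in examples):
            return name
    return None


# Two demonstration pairs; the rule itself is never stated.
examples = [
    ([[1, 0], [2, 3]], [[0, 1], [3, 2]]),
    ([[4, 5, 6]], [[6, 5, 4]]),
]

rule = infer_rule(examples)
test_input = [[7, 8], [9, 0]]
prediction = CANDIDATES[rule](test_input) if rule else None
print(rule, prediction)
```

The solver has to recover the rule from the examples alone and then apply it to an input it has not seen. A benchmark like ARC is hard because the space of possible rules is open-ended rather than enumerated in advance, as it is here.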

If the result is independently validated, a doubling of performance over the previous Gemini version would suggest architectural improvements, not just prompt tuning.

However, benchmark gains do not automatically equal real-world robustness. Enterprise value depends on reliability under unpredictable conditions.

Benchmarks are indicators. Production stability is proof.

Why Reasoning Is Becoming the Real Battleground

Over the last two years, AI competition largely revolved around:

- Creative writing

- Conversational quality

- Multimodal generation

- Personality and tone

Those capabilities are maturing, and they no longer separate the leading models as clearly.

The new competitive frontier is:

- Logical abstraction

- Tool invocation accuracy

- Agentic orchestration

- Multi-step planning

- Deterministic output behavior

Enterprises don’t prioritize novelty. They prioritize correctness.

Higher-order reasoning directly impacts:

- Financial analysis

- Legal summarization

- Code generation

- Scientific modeling

- Structured workflow automation

If Gemini 3.1 Pro truly strengthens reasoning, it aligns tightly with enterprise demand.

SVG Generation and Code-Based Outputs: A Practical Signal

One practical capability highlighted in the release is code-generated animated SVG output.

This is significant.

SVG (Scalable Vector Graphics):

- Scales to any size without pixelation

- Usually stays small in file size for simple shapes and icons

- Integrates directly into web applications

- Enables dynamic, code-driven animation

Unlike raster image generation, SVG generation requires the model to emit valid, structured markup, so it exercises reasoning as well as visual output.

It’s not just “generate a pretty image.”

It’s “generate usable front-end code.”
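As a rough illustration of what "usable front-end code" means here, the sketch below builds a small animated SVG in Python and checks that it is well-formed XML. It is hand-written, not output from Gemini. The function name `animated_circle_svg` and its parameters are assumptions made for the example; the `<animate>` element and its attributes are standard SVG.

```python
import xml.etree.ElementTree as ET


def animated_circle_svg(size: int = 120, duration_s: float = 2.0) -> str:
    """Return SVG markup for a circle whose radius pulses in a loop."""
    half = size // 2
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'viewBox="0 0 {size} {size}" width="{size}" height="{size}">\n'
        f'  <circle cx="{half}" cy="{half}" r="10" fill="steelblue">\n'
        # Animate the radius from 10 up to near the edge and back again.
        f'    <animate attributeName="r" values="10;{half - 10};10" '
        f'dur="{duration_s}s" repeatCount="indefinite"/>\n'
        f'  </circle>\n'
        f'</svg>\n'
    )


svg_markup = animated_circle_svg()

# Parsing raises xml.etree.ElementTree.ParseError if the markup is malformed.
root = ET.fromstring(svg_markup)
print(svg_markup)
```

The markup can be pasted into an HTML page or saved as an `.svg` file. Because the output is text with a strict grammar, a single unclosed tag or misplaced attribute breaks it, which is why this kind of generation is a reasonable proxy for structured-output quality.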

This positions Gemini not merely as a chatbot, but as a developer-facing productivity engine.

Ecosystem Integration: Google’s Strategic Advantage

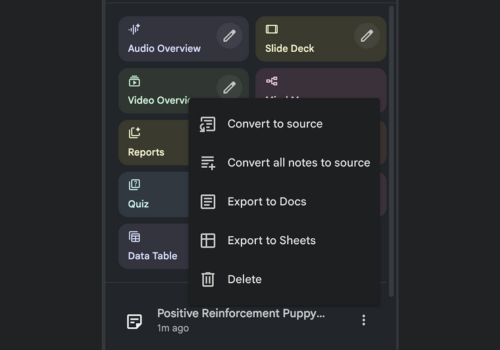

Gemini 3.1 Pro isn’t confined to a single interface.

It’s rolling out across:

- Consumer-facing AI assistants

- Developer APIs via Vertex AI

- Research workflows in NotebookLM

- Cloud-based enterprise infrastructure

This coordinated deployment reflects Google’s long-standing advantage: vertical integration.

Rather than isolating AI into standalone apps, Google embeds it directly into:

- Search-adjacent experiences

- Cloud compute environments

- Research documentation tools

- Developer ecosystems

That reduces friction and accelerates adoption.

AI becomes part of the infrastructure, not a parallel system.

The Broader Industry Context

The release arrives during intensified competition in:

- Reasoning benchmarks

- Agent frameworks

- Enterprise deployment reliability

- Developer adoption velocity

Across major labs, the emphasis is shifting toward:

- Lower hallucination rates

- Higher deterministic consistency

- More reliable tool usage

- Scalable deployment architectures
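One simple way to put a number on "deterministic consistency" is to send the same prompt several times and measure how often the most common answer appears. The sketch below assumes a hypothetical `generate` callable standing in for a model call; the stub used here is not a real API, and real evaluations would normalize outputs more carefully than a plain `strip()`.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def consistency_rate(generate: Callable[[str], str], prompt: str, runs: int = 10) -> float:
    """Fraction of runs that return the most common (modal) output."""
    outputs = [generate(prompt).strip() for _ in range(runs)]
    _, modal_count = Counter(outputs).most_common(1)[0]
    return modal_count / runs


# Stub "model" for illustration only: answers "42" three times out of four.
_answers = cycle(["42", "42", "42", "41"])


def fake_model(prompt: str) -> str:
    return next(_answers)


rate = consistency_rate(fake_model, "What is 6 * 7?", runs=10)
print(f"consistency: {rate:.0%}")
```

A rate of 1.0 means every run agreed. Note that agreement is not the same as correctness: a model can be consistently wrong, so a metric like this complements accuracy checks and does not replace them.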

In 2026, AI leadership is unlikely to be measured by parameter count alone.

It will be measured by:

- Logical reliability

- Integration maturity

- Operational stability

Gemini 3.1 Pro is Google’s bid in that recalibrated arena.

The Real Question: Does It Scale Beyond Benchmarks?

ARC-AGI-2 performance is impressive, if validated.

But the real test lies in:

- Long-context reasoning reliability

- Multi-agent orchestration

- Tool chaining stability

- Enterprise-grade uptime and monitoring

Higher reasoning accuracy must translate into:

- Fewer hallucinations

- Better structured outputs

- Stronger agent workflows

- More predictable behavior under edge cases
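In practice, "better structured outputs" is something a pipeline can check before acting on a model's response. The sketch below validates a JSON tool call against a small registry. The tool name `get_invoice_total`, its arguments, and the `{"tool": ..., "arguments": ...}` shape are all hypothetical; they do not describe Gemini's or Vertex AI's actual function-calling format.

```python
import json

# Hypothetical tool registry: tool name -> required argument names and types.
TOOL_SPECS = {
    "get_invoice_total": {"invoice_id": str, "currency": str},
}


def validate_tool_call(raw: str) -> tuple[bool, str]:
    """Check that a model response is a well-formed call to a known tool."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"not valid JSON: {exc.msg}"
    if not isinstance(call, dict):
        return False, "top-level value must be an object"

    tool = call.get("tool")
    if not isinstance(tool, str) or tool not in TOOL_SPECS:
        return False, f"unknown tool: {tool!r}"

    spec = TOOL_SPECS[tool]
    args = call.get("arguments")
    if not isinstance(args, dict):
        return False, "arguments must be an object"
    if set(args) != set(spec):
        return False, f"expected arguments {sorted(spec)}, got {sorted(args)}"

    for name, expected_type in spec.items():
        if not isinstance(args[name], expected_type):
            return False, f"argument {name!r} must be {expected_type.__name__}"
    return True, "ok"


good = '{"tool": "get_invoice_total", "arguments": {"invoice_id": "INV-7", "currency": "EUR"}}'
bad = '{"tool": "get_invoice_total", "arguments": {"invoice_id": 7}}'

print(validate_tool_call(good))
print(validate_tool_call(bad))
```

Tracking how often responses fail a check like this, across many prompts and edge cases, gives a production-side measure of reliability that a benchmark score alone does not provide.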

That’s where market leadership will be decided.

Final Takeaway

Gemini 3.1 Pro signals a clear strategic pivot toward structured reasoning as the core differentiator in advanced AI systems.

Less emphasis on flashy generative novelty.

More emphasis on abstraction, logic, and production reliability.

If benchmark gains carry over into real-world applications, Google strengthens its position in both enterprise AI and developer ecosystems.

But as always in AI, benchmarks open the conversation.

Deployment stability determines the outcome.